Pre-training for Speech Translation: CTC Meets Optimal Transport

International Conference on Machine Learning (ICML), 2023

Oral presentation


Abstract

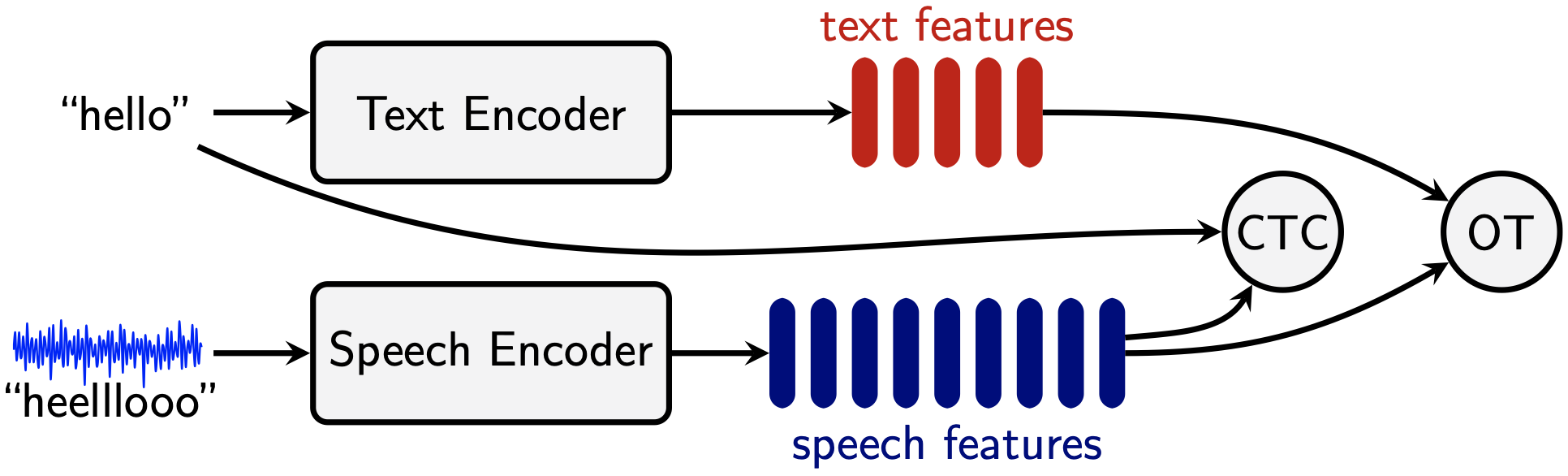

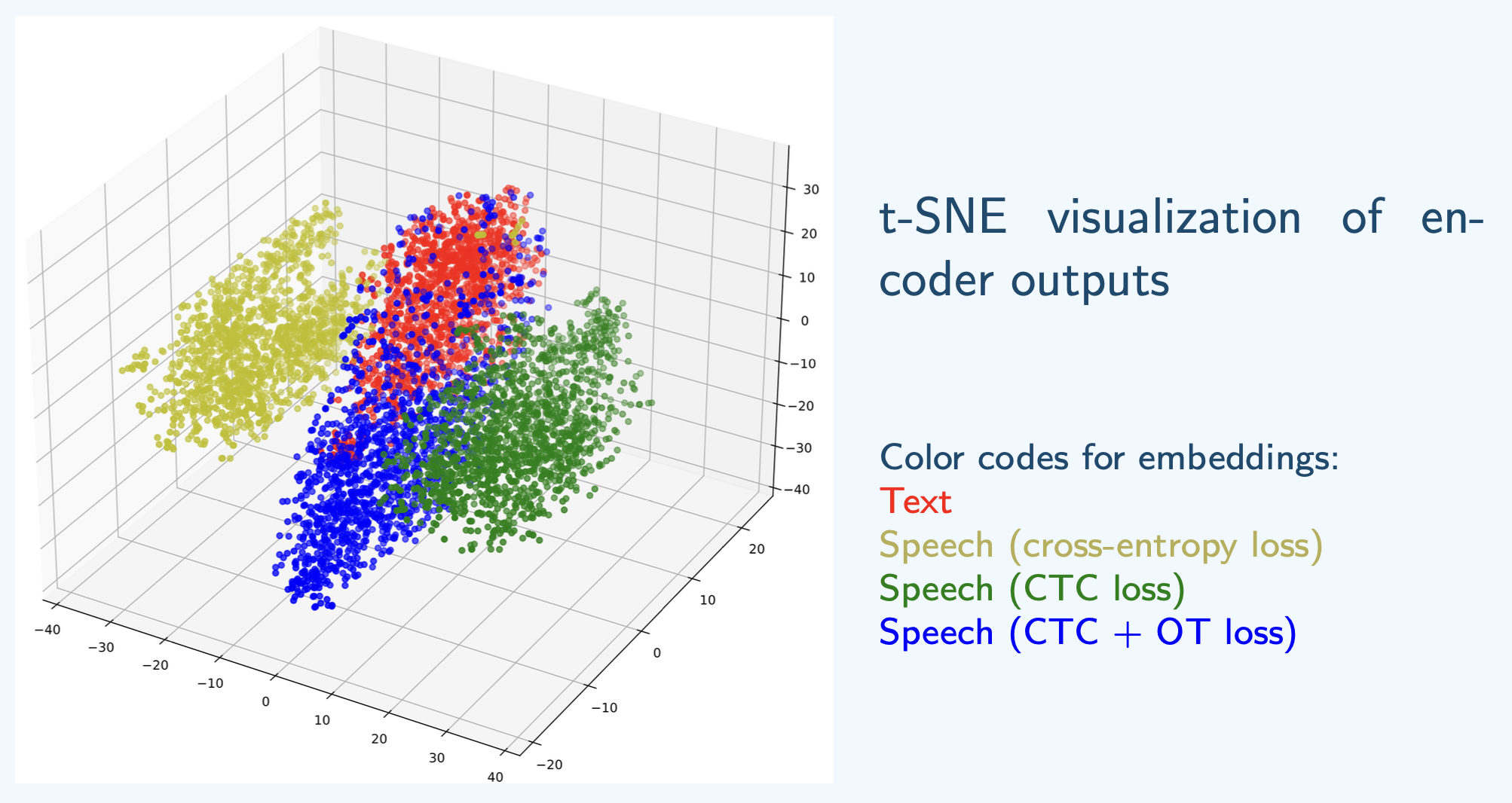

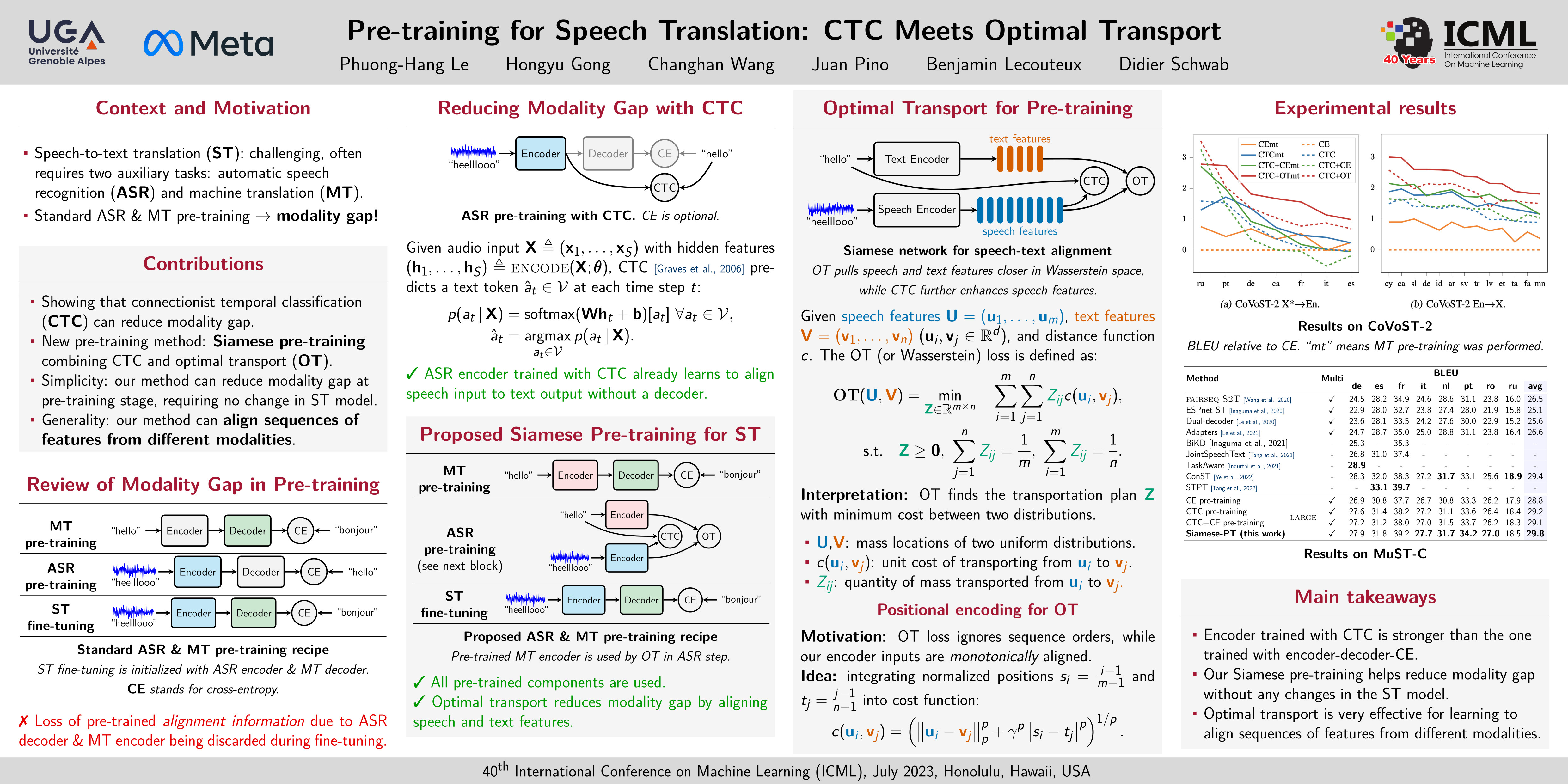

The gap between speech and text modalities is a major challenge in speech-to-text translation (ST). Different methods have been proposed to reduce this gap, but most of them require architectural changes in ST training. In this work, we propose to mitigate this issue at the pre-training stage, requiring no change in the ST model. First, we show that the connectionist temporal classification (CTC) loss can reduce the modality gap by design. We provide a quantitative comparison with the more common cross-entropy loss, showing that pre-training with CTC consistently achieves better final ST accuracy. Nevertheless, CTC is only a partial solution and thus, in our second contribution, we propose a novel pre-training method combining CTC and optimal transport to further reduce this gap. Our method pre-trains a Siamese-like model composed of two encoders, one for acoustic inputs and the other for textual inputs, such that they produce representations that are close to each other in the Wasserstein space. Extensive experiments on the standard CoVoST-2 and MuST-C datasets show that our pre-training method applied to the vanilla encoder-decoder Transformer achieves state-of-the-art performance under the no-external-data setting, and performs on par with recent strong multi-task learning systems trained with external data. Finally, our method can also be applied on top of these multi-task systems, leading to further improvements for these models.
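As a rough illustration of what "close to each other in the Wasserstein space" can mean for two encoder outputs of different lengths, the sketch below computes an entropy-regularized optimal transport cost between a sequence of speech frames and a sequence of text tokens using Sinkhorn iterations. This is a generic approximation written for illustration, not the authors' implementation: the function name `sinkhorn_distance`, the squared Euclidean cost, the uniform weights, and the values of `epsilon` and `n_iters` are all assumptions, and the abstract does not specify how the paper defines its cost or combines this term with CTC.

```python
import math


def sinkhorn_distance(speech, text, epsilon=1.0, n_iters=200):
    """Entropy-regularized OT cost between two sequences of feature vectors.

    Hypothetical helper for illustration only. Both sequences are given
    uniform weights, and the ground cost is the squared Euclidean distance.
    """
    n, m = len(speech), len(text)

    # Pairwise squared Euclidean cost between every frame and every token.
    cost = [[sum((a - b) ** 2 for a, b in zip(u, v)) for v in text]
            for u in speech]

    # Gibbs kernel; very large costs relative to epsilon can underflow to 0,
    # so this plain (non-log-domain) version is only suitable for toy inputs.
    kernel = [[math.exp(-c / epsilon) for c in row] for row in cost]

    # Uniform marginals: each frame and each token carries equal mass.
    a = [1.0 / n] * n
    b = [1.0 / m] * m

    # Sinkhorn scaling vectors, updated alternately to match both marginals.
    u = [1.0] * n
    v = [1.0] * m
    for _ in range(n_iters):
        u = [a[i] / sum(kernel[i][j] * v[j] for j in range(m))
             for i in range(n)]
        v = [b[j] / sum(kernel[i][j] * u[i] for i in range(n))
             for j in range(m)]

    # Transport plan and the resulting transport cost.
    plan = [[u[i] * kernel[i][j] * v[j] for j in range(m)] for i in range(n)]
    return sum(plan[i][j] * cost[i][j] for i in range(n) for j in range(m))


# Toy example: four speech frames, two text tokens, two-dimensional features.
speech_frames = [[0.0, 0.0], [0.0, 0.1], [1.0, 1.0], [1.0, 0.9]]
aligned_tokens = [[0.0, 0.0], [1.0, 1.0]]
distant_tokens = [[3.0, 3.0], [4.0, 4.0]]

close_cost = sinkhorn_distance(speech_frames, aligned_tokens)
far_cost = sinkhorn_distance(speech_frames, distant_tokens)
print(close_cost < far_cost)
```

Because the cost is defined over sets of vectors rather than position-by-position, it does not require the speech and text sequences to have the same length, which is one reason an optimal transport term is a natural fit for comparing the two modalities. In a training setting, a differentiable version of such a cost would be minimized with respect to the encoder parameters.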


Citation

@inproceedings{le2023pretraining,
  author    = {Le, Phuong-Hang and
               Gong, Hongyu and
               Wang, Changhan and
               Pino, Juan and
               Lecouteux, Benjamin and
               Schwab, Didier},
  title     = {Pre-training for Speech Translation: CTC Meets Optimal Transport},
  booktitle = {Proceedings of the 40th International Conference on Machine Learning (ICML)},
  year      = {2023}
}